When Linus Torvalds created Git in 2005, he solved a very human problem: how do distributed teams of engineers coordinate changes to a shared codebase without stepping on each other’s toes? The design was elegant, the model brilliant – and entirely anthropocentric. Every concept in the Git workflow, from commits to branches to the pull requests that hosting platforms later built on top, was designed to reflect human reasoning about software change.

Nobody thought about machines. Nobody had to.

GitHub today: 420 million repositories – the world’s largest unintentional AI training dataset, built by humans for humans, read by machines for everything.

The Unintended Consequence of 20 Years of Open Source

Fast forward to today. GitHub hosts over 420 million repositories. The platform has become, without anyone planning it that way, the single largest structured dataset of human reasoning about software in existence. Not raw text, not unstructured web crawls – but versioned, annotated, context-rich artifacts of how engineers think, communicate, decide, and build.

When OpenAI trained Codex, when DeepMind built AlphaCode, when Anthropic trained Claude – they all fed on this corpus. The commit messages, the PR discussions, the inline comments, the issue threads, the README files. Git history is, quite literally, the autobiography of software development, and AI learned to read it before most organizations realized what they were giving away.

The irony is profound. A version control system designed for human collaboration became the substrate for training non-human intelligence. Git was the library. GitHub was the librarian. And the AI models were the quiet students who read everything, remembered everything, and said nothing.

What Does a Machine Actually Learn from a Repository?

This is where it gets interesting – and where most CTO conversations I have still miss the point. The common assumption is that AI learns “code” from GitHub. That is technically true but deeply incomplete. What AI systems actually absorb is something far richer: the relationship between intent and implementation.

A commit message that reads “fix edge case in authentication flow when user has expired token” paired with a fifteen-line diff teaches a model not just syntax, but causality. The issue thread that preceded it, the review comments that shaped it, the test that was added afterward – together, they represent a chain of engineering reasoning that no textbook ever captured at scale.
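To make the idea of an intent-implementation pair concrete, here is a minimal sketch of how one such unit of reasoning could be represented. The `ChangeRecord` class, its field names, and the sample diff are illustrative assumptions, not a format that any training pipeline is known to use.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRecord:
    """One unit of engineering reasoning: why a change was made and what it did."""
    issue_title: str      # the problem as originally reported
    commit_message: str   # the stated intent of the change
    diff: str             # the implementation, as a unified diff
    review_comments: list[str] = field(default_factory=list)

    def changed_line_count(self) -> int:
        """Count added and removed lines, skipping the '+++'/'---' file headers.

        Simplification: a changed line whose own content begins with '--' or
        '++' would be mistaken for a header and not counted.
        """
        return sum(
            1
            for line in self.diff.splitlines()
            if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
        )


# Hypothetical example data mirroring the commit message quoted above.
record = ChangeRecord(
    issue_title="Login fails after token expiry",
    commit_message="fix edge case in authentication flow when user has expired token",
    diff=(
        "--- a/auth.py\n"
        "+++ b/auth.py\n"
        "@@ -10,3 +10,5 @@\n"
        " def authenticate(user):\n"
        "-    return user.token.valid\n"
        "+    if user.token.expired:\n"
        "+        return refresh(user)\n"
        "+    return user.token.valid\n"
    ),
    review_comments=["Please add a test for the expired-token path."],
)

print(record.commit_message, "->", record.changed_line_count(), "changed lines")
```

The point of the structure is that the message, the diff, and the surrounding discussion travel together. Separated, each is just text; paired, they describe a cause and its effect.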

This is fundamentally different from learning from documentation. Documentation describes what code does. Repositories reveal how engineers think about what code should do, why it changes, and under what conditions decisions get revisited. It is the difference between reading a law and watching a courtroom.

The Next Evolution: Repos as AI-Native Artifacts

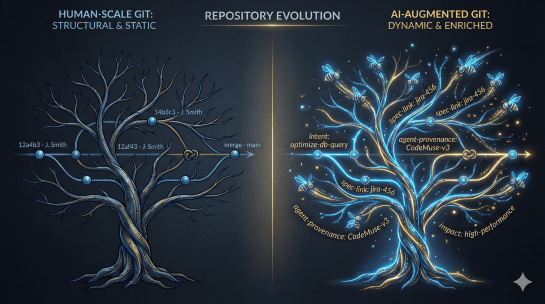

We stand at an inflection point that most engineering organizations have not yet grasped. If repositories are already the de facto training substrate for AI, the logical next step is to design repositories with that fact in mind. Not reactively, not accidentally – but intentionally.

In the coming years, we will see repositories evolve from pure version control archives into structured knowledge bases that explicitly address AI agents as consumers. This means several concrete developments that are already beginning to emerge in practice.

The first is the emergence of a dedicated specification layer. Today, most codebases contain implicit intent buried in comments, naming conventions, and tribal knowledge that lives only in the heads of the engineers who wrote it. Tomorrow’s repositories will carry explicit machine-readable specifications – linked directly from the code they describe – that articulate not just what a module does, but what it is trying to achieve and what constraints govern its evolution. OpenSpec and similar frameworks are early experiments in this direction.
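As a rough illustration of what such a specification could look like, the sketch below loads a small JSON spec that names the module it describes, its purpose, and its constraints. The schema, the field names, and the file path are assumptions made for this example; they are not taken from OpenSpec or any other existing format.

```python
import json
from dataclasses import dataclass

# Hypothetical minimal schema: every spec must carry these three fields.
REQUIRED_FIELDS = {"module", "purpose", "constraints"}


@dataclass(frozen=True)
class ModuleSpec:
    module: str                    # path of the code this spec describes
    purpose: str                   # what the module is trying to achieve
    constraints: tuple[str, ...]   # rules that govern how it may evolve


def load_spec(text: str) -> ModuleSpec:
    """Parse a JSON spec and fail loudly if a required field is absent."""
    data = json.loads(text)
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"spec is missing fields: {sorted(missing)}")
    return ModuleSpec(data["module"], data["purpose"], tuple(data["constraints"]))


# Hypothetical spec content, for instance stored next to the module itself.
EXAMPLE_SPEC = """
{
  "module": "auth/session.py",
  "purpose": "Keep a user signed in without ever accepting an expired token",
  "constraints": [
    "Token validation must happen before any database access",
    "Public function signatures may not change without a major version bump"
  ]
}
"""

spec = load_spec(EXAMPLE_SPEC)
print(spec.module, "-", spec.purpose)
```

Rejecting incomplete specs at load time is a deliberate choice here: a specification that an agent can only partly trust is arguably worse than none.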

The second shift is what might be called the Intent Layer. Beyond the specification of individual components, future repositories will carry structured metadata that describes the reasoning behind architectural decisions. Why was this approach chosen over the alternatives? What trade-offs were consciously accepted? What assumptions does this design rely on? This is the kind of context that AI agents need to reason correctly about a codebase – not just to generate code that compiles, but to generate code that fits.
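One way to picture the Intent Layer is as a set of decision records that an agent can look up by file path before it touches the code. The sketch below assumes a hypothetical `DecisionRecord` structure whose fields mirror the three questions above; the records themselves are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    title: str
    chosen: str                     # the approach that was selected
    alternatives: tuple[str, ...]   # options that were considered and rejected
    trade_offs: tuple[str, ...]     # costs that were consciously accepted
    assumptions: tuple[str, ...]    # what the design relies on being true
    applies_to: tuple[str, ...]     # path prefixes this decision governs


def decisions_for(path: str, records: list[DecisionRecord]) -> list[DecisionRecord]:
    """Return every decision whose scope covers the given file path."""
    return [
        record
        for record in records
        if any(path.startswith(prefix) for prefix in record.applies_to)
    ]


# Hypothetical records; in practice these might live in a dedicated directory.
RECORDS = [
    DecisionRecord(
        title="Use short-lived access tokens",
        chosen="15-minute tokens with silent refresh",
        alternatives=("long-lived sessions",),
        trade_offs=("more refresh traffic",),
        assumptions=("clients can reach the refresh endpoint",),
        applies_to=("auth/",),
    ),
    DecisionRecord(
        title="Store invoices as immutable events",
        chosen="append-only event log",
        alternatives=("mutable invoice rows",),
        trade_offs=("more storage", "slower ad hoc queries"),
        assumptions=("invoices are never edited after issue",),
        applies_to=("billing/",),
    ),
]

relevant = decisions_for("auth/session.py", RECORDS)
for record in relevant:
    print(record.title, "| assumes:", ", ".join(record.assumptions))
```

An agent that retrieves the first record before editing `auth/session.py` knows that proposing long-lived sessions would contradict a deliberate choice, which is exactly the difference between code that compiles and code that fits.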

The third development is the rise of agent-aware commit protocols. If AI agents are both reading and writing to repositories – which they already are in many of our development pipelines today – the commit structure itself needs to evolve. Automated commits should carry provenance metadata: which model generated this, from which specification, against which test harness, with what confidence. Human commits will increasingly need corresponding context flags that distinguish deliberate design choices from pragmatic workarounds.
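Git already has a place where such provenance could live: trailers, the `Key: value` lines at the end of a commit message. The sketch below appends and reads back provenance trailers. The trailer keys (`Generated-By`, `Spec`, `Test-Harness`, `Confidence`) and their values are hypothetical, and the parser is a deliberate simplification of Git's own trailer rules.

```python
def add_trailers(message: str, trailers: dict[str, str]) -> str:
    """Append a trailer block to a commit message, separated by a blank line."""
    block = "\n".join(f"{key}: {value}" for key, value in trailers.items())
    return f"{message.rstrip()}\n\n{block}\n"


def parse_trailers(message: str) -> dict[str, str]:
    """Read 'Key: value' lines from the final paragraph of a commit message.

    Simplified: returns {} unless every line of the last paragraph looks like
    a trailer, and never treats a lone subject line as a trailer block.
    """
    paragraphs = message.strip().split("\n\n")
    if len(paragraphs) < 2:
        return {}
    trailers: dict[str, str] = {}
    for line in paragraphs[-1].splitlines():
        key, separator, value = line.partition(": ")
        if not separator or " " in key:
            return {}
        trailers[key] = value
    return trailers


# Hypothetical provenance for an automated commit.
provenance = {
    "Generated-By": "example-model-v1",
    "Spec": "specs/auth/session.json",
    "Test-Harness": "tests/auth",
    "Confidence": "0.82",
}

commit_message = add_trailers(
    "fix edge case in authentication flow when user has expired token",
    provenance,
)
print(commit_message)
```

Using trailers rather than a new file format keeps the metadata inside the commit itself, so it survives clones, mirrors, and history rewrites that preserve messages.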

The Strategic Implication Nobody Is Talking About

There is a competitive dimension here that deserves more executive attention than it currently receives. Organizations that deliberately enrich their repositories with machine-readable intent and specification data are not just improving their own AI-assisted development workflows. They are producing higher-quality training data for the next generation of models. If open-source development continues to feed the pre-training pipelines of frontier AI systems, then the quality of the reasoning encoded in public repositories will shape the quality of the AI that the entire industry relies on.

This creates an asymmetry: companies that treat their internal codebases as structured knowledge assets – not just as source code archives – will build internal AI capabilities that reflect higher-quality reasoning. The gap between organizations that have thought seriously about repository architecture as an AI substrate and those that have not will become a measurable capability difference within this decade.

Git was never built for machines. But machines have made themselves at home, and now the question is whether we redesign the house accordingly.

The answer, for any organization serious about AI-driven development, is yes. And the time to start is now – not when the next model generation arrives, but before it does, while there is still time to shape what it learns from you.

This post is part of a series on the structural shifts in software development brought on by AI integration. Previous entries covered specification-driven development and multi-agent orchestration in enterprise codebases.