How we ended up here

Over the last three years, something has shifted in enterprise IT that I did not fully anticipate, even after thirty-five years in this industry. The tools we use to build software have not just improved — they have fundamentally changed what it means to be a developer, an architect, or a platform engineer. Large frontier models from major AI laboratories have moved from impressive demonstrations to daily production instruments. And with that shift, the center of gravity in software development is moving from code to specification.

From Writing Code to Owning the Lifecycle

When I started in this business, a good developer was someone who could write tight, efficient code. Over the decades, the role expanded — version control, testing, deployment, monitoring. DevOps made developers responsible for the full lifecycle. CI/CD pipelines, Infrastructure as Code, observability — all of this became part of what a software professional must understand.

Now another expansion is happening. Advanced coding systems from major AI labs can generate, refactor, test, and document code at a speed and consistency that no human can match on pure throughput. I have seen this in my own teams. A task that took a senior developer two days — writing integration tests for a legacy API — was drafted in twenty minutes by a reasoning system, reviewed and adjusted by the developer in another hour.

The developer did not become unnecessary. The developer became the person who decided what the tests should validate, how edge cases map to business logic, and whether the generated output was actually correct. The skill shifted from typing to thinking.

Specification-Driven Development: The Real Asset Is Not the Code

This is where something genuinely new is emerging. If state-of-the-art foundation models can produce working code from well-written instructions, then the quality of those instructions becomes the competitive advantage. Not the code. The specification.

I have started calling this Specification-Driven Development, and I am not alone. Methodologies like BMAD and OpenSpec are formalizing what many of us felt intuitively: the person who writes the best specification wins. Not the person who types the fastest. Not the person who memorizes the most framework APIs.

In my own practice, I have seen projects where a precise, well-structured specification document — describing behavior, constraints, interfaces, error handling, and security boundaries — produced better results through AI-assisted generation than a team of four developers working from a vague user story. The specification became the actual product asset. The code became a derivative. Reproducible, replaceable, regeneratable.

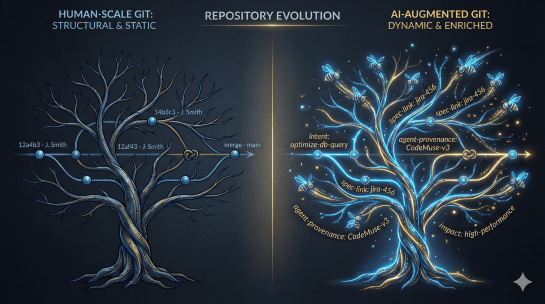

This is a profound change in how we must think about software ownership. Configuration management, version control, review processes — all of these must now apply to specifications with the same rigor we applied to source code for decades.

The Risk of Letting the Machine Think for You

I would be dishonest if I did not address the danger. Over-reliance on new generation reasoning systems is real and I have witnessed it. Junior developers accepting generated code without understanding what it does. Architects letting AI propose system boundaries without questioning whether the decomposition fits the organizational reality. Security engineers trusting AI-generated policies without tracing them back to actual threat models.

AI amplifies capability. It does not replace responsibility. Every generated artifact needs a human who understands why it exists, what it should do, and what happens when it fails. The moment we lose that, we do not have automation — we have negligence with extra steps.

The skill requirements are shifting hard toward validation, architectural reasoning, and critical thinking. These are not new skills. They are the skills we always said mattered most but rarely prioritized in hiring because we were too busy looking for people who knew specific frameworks.

AI Agents in the Enterprise: Less Applications, More Workflows

Beyond development, the transformation is reshaping how enterprises operate at every level. In my current environment, I see a clear pattern: traditional applications are being decomposed into AI-driven agent workflows. Instead of a monolithic reporting tool, we now have intelligent agents that collect data across systems, apply contextual reasoning, and surface decisions — not dashboards.

The result is less management overhead and more focus on the actual core of the task. A procurement specialist no longer spends hours consolidating supplier data from three systems. An agent does that. The specialist spends time on negotiation strategy, relationship assessment, risk evaluation — the things that require human judgment and experience.

Better decisions through data is not a new promise. What is new is that the massive amount of data enterprises sit on can now actually be processed contextually by frontier models, not just aggregated into charts that nobody reads. The data becomes actionable because the reasoning layer between raw information and human decision has become genuinely capable.

I have seen this reduce decision latency in operational contexts by days. Not because people were slow before, but because the data preparation work that preceded every decision was slow. That bottleneck is dissolving.

The Psychological Weight of Constant Adaptation

What I do not see discussed enough is the pressure this puts on people. Every six months, the capabilities of these systems leap forward. What was impossible last year is routine today. For a forty-year-old platform engineer who spent a decade mastering Kubernetes and Terraform, being told that the next generation of infrastructure management might look entirely different is not exciting. It is exhausting.

I feel this myself. After thirty-five years, I still spend evenings reading, testing, adjusting my mental models. Not because I enjoy the pressure, but because the alternative — becoming irrelevant — is worse. And I say this as someone who genuinely finds this technology fascinating. For colleagues who are less enthusiastic about constant change, the psychological burden is real.

Organizations that do not acknowledge this — that simply announce the next AI initiative without investing in their people’s learning capacity and emotional resilience — will lose their best engineers. Not to competitors. To burnout.

So What Changes for People?

The honest answer is: almost everything about the daily work, but nothing about what makes a good professional. Critical thinking, accountability, the ability to translate business needs into precise technical requirements — these have always been the core. They just were hidden behind the noise of implementation details.

What changes is that the implementation layer is increasingly handled by advanced AI systems. The human role moves upstream — to specification, validation, architectural decision-making, and ethical judgment. Developers become specification authors and quality gatekeepers. Platform engineers become orchestrators of AI-augmented infrastructure. Security engineers become the last line of reasoning before automated systems act on sensitive boundaries.

The code is becoming a byproduct. The specification is becoming the asset. And the people who understand this shift — who invest in learning how to instruct, constrain, and validate machine-generated output — will define the next era of enterprise technology. Not the machines alone. Never the machines alone.